Intelligent Music Interfaces 2024: When Interactive Assistance and Adaptive Augmentation Meet Musical Instruments

Do you like musical instruments? Are you interested in using interactive technologies to assist and augment musicians? Join our workshop at the Augmented Humans (AHs) International Conference 2024! The workshop is hybrid, so we support both in-person and virtual participation.

Important Dates

27 February 2024: Submission Opens

20 March 2024: Submission Deadline (extended from 15 March)

Submission site: EasyChair

24 March 2024: Review Notification (moved from 19 March)

06 April 2024: Workshop (Hybrid)

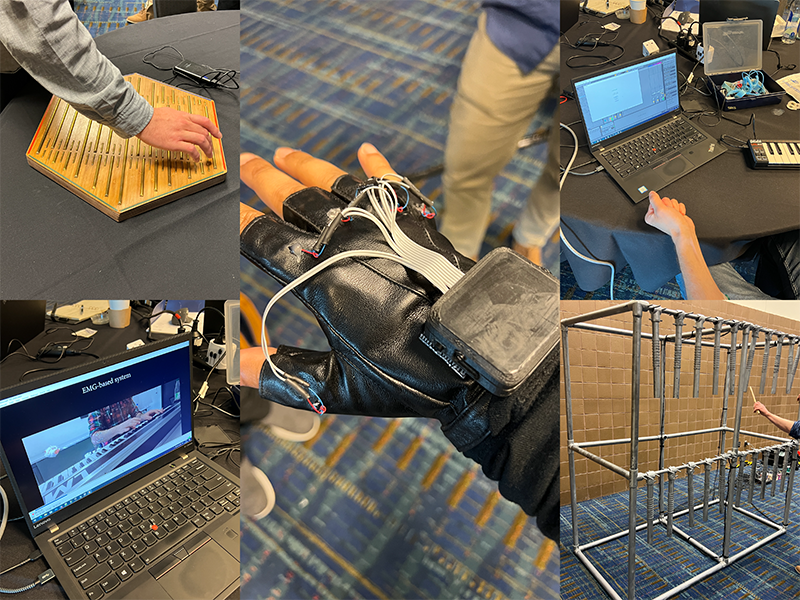

Some pictures from the previous workshop at CHI 2022.

Playing a musical instrument brings many benefits, such as positive effects on mental health and dexterity. Electronic elements were integrated into traditional musical instruments in the early 1930s to create instruments, such as electric guitars, that offer new ways of musical expression. Electric instruments have since evolved by incorporating networking and computational capabilities. These new capabilities can be leveraged to further broaden artists' expressiveness, enhance learning scenarios, allow remote collaboration between musicians, and even create entirely new musical instruments.

In this workshop, we will discuss and interact with intelligent music interfaces of any form. A novel music interface could be a new adaptation of a traditional musical instrument, an interface for learning, or even supporting software. The workshop will be held in conjunction with the Augmented Humans International Conference on April 6th in Melbourne, Australia, with both in-person and virtual participation supported.